My music

Loosies

American Electronic

Small Guitar Pieces

Maximum R&D

Love me on Tidal!!111!

Neurogami: Dance Noise

Neurogami: Maximum R&D

New track added to Loosies - Schnee Gefallen

Stuff and not stuff

I’ve been stuck in a creative limbo since the death of my wife a few years ago.

A big part of it is that so much of my sense of self was based on my relationship with her. So much of the joy of making art of any kind came from sharing it with her. She didn’t always care for what I made, often referring to my music as “scratchy guitar” (a term I came to embrace). But when she died there was, unsurprisingly, this great void.

Read the full post ...

More Suggested Listening

I’ve added more items to my Suggested Listening page, now at about 140 possible new finds for you.

Read the full post ...

Holger Czukay Article

A little while back I wrote an article about Holger Czukay for a small zine.

It's the same zine that ran my Holger Czukay papertoy.

I figure I should just publish it here.

Read the full post ...

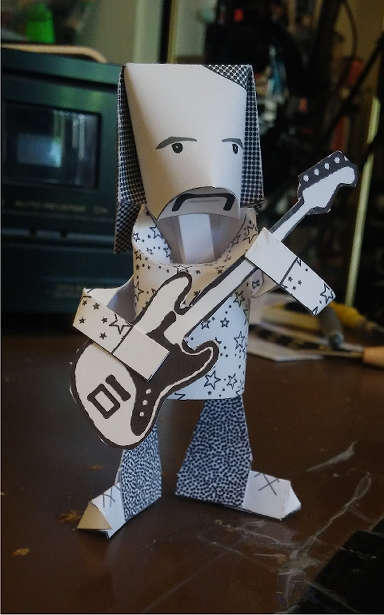

Holger Czukay Paper Toy

Some years ago I made a Holger Czukay papertoy for an obscure zine.

I decided it should be available to more people, so I’ve uploaded it to this site.

You can read how to download and build the toy here.

Read the full post ...

Stuff

Some suggested listening

I've assembled a page of music by artists I think merit your consideration.